Polynomial Regression: A Closer Look at Underfitting and Overfitting

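This article implements polynomial regression in Python with PyTorch and shows how the fit changes with the number of polynomial terms: a normal fit, underfitting, and overfitting. The aim is to help readers understand the relationship between model complexity and generalization in machine learning.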


import math
import numpy as np
import torch
from torch import nn
from d2l import torch as d2l

- Generate the dataset (the noise term follows a normal distribution with mean 0 and standard deviation 0.1)
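For reference, the code in this step appears to draw the labels from the cubic polynomial below, with each power of the feature rescaled by a factorial. The symbols $x$, $y$, and $\epsilon$ are notation introduced here for illustration; they are not names from the code.

```latex
y = 5 + 1.2\,x - 3.4\,\frac{x^{2}}{2!} + 5.6\,\frac{x^{3}}{3!} + \epsilon,
\qquad \epsilon \sim \mathcal{N}(0,\ 0.1^{2})
```

The coefficients 5, 1.2, -3.4, and 5.6 are the four nonzero entries of `true_w`; the remaining 16 coefficients are zero.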

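# Maximum degree of the polynomial (20 terms, powers 0 through 19)
max_degree = 20
# Sizes of the training and test sets
n_train, n_test = 100, 100
# Allocate room for all 20 coefficients; most of them stay zero
true_w = np.zeros(max_degree)
# Only the first four entries are true weights of the polynomial
true_w[0:4] = np.array([5, 1.2, -3.4, 5.6])
# Sample features of shape (n_train + n_test, 1) from a standard normal distribution
features = np.random.normal(size=(n_train + n_test, 1))
# Shuffle the order of the features
np.random.shuffle(features)
# Broadcast each feature x_k against the exponents [0, 1, ..., 19] to get a 2-D array:
# [[x_1^0,   x_1^1,   x_1^2,   ..., x_1^19],
#  [x_2^0,   x_2^1,   x_2^2,   ..., x_2^19],
#  ...
#  [x_200^0, x_200^1, x_200^2, ..., x_200^19]]
# Each column holds one power of features
poly_features = np.power(features, np.arange(max_degree).reshape(1, -1))
# Rescale column i by i! so that high powers do not grow too large
for i in range(max_degree):
    poly_features[:, i] /= math.gamma(i + 1)  # gamma(n) = (n - 1)!, so gamma(i + 1) = i!
# (200, 20) times (20,) ==> (200,)
labels = np.dot(poly_features, true_w)
# Add normally distributed noise with standard deviation 0.1
labels += np.random.normal(scale=0.1, size=labels.shape)

- Train and test the model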

class Accumulator:
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [float(a) + b for a, b in zip(args, self.data)]

    def reset(self):
        self.data = [0.0] * len(self.data)

    def __getitem__(self, idx):
        return self.data[idx]

def evaluate_loss(net, data_iter, loss):
    """Evaluate the loss of a model on the given dataset."""
    metric = d2l.Accumulator(2)  # Sum of losses, number of samples
    for X, y in data_iter:
        out = net(X)
        y = y.reshape(out.shape)
        # loss returns a tensor with one loss value per sample
        l = loss(out, y)
        # Accumulate the summed loss and the sample count for this batch
        metric.add(l.sum(), l.numel())
    # Return the average loss per sample
    return float(metric[0] / metric[1])

# Convert the NumPy arrays to float32 tensors
true_w, features, poly_features, labels = [torch.tensor(x, dtype=torch.float) for x in [true_w, features, poly_features, labels]]

features[:2], poly_features[:2, :], labels[:2]

(tensor([[-1.1872],
         [-0.3378]]),
 tensor([[ 1.0000e+00, -1.1872e+00,  7.0472e-01, -2.7888e-01,  8.2772e-02,
          -1.9653e-02,  3.8887e-03, -6.5953e-04,  9.7874e-05, -1.2911e-05,
           1.5327e-06, -1.6542e-07,  1.6366e-08, -1.4946e-09,  1.2674e-10,
          -1.0031e-11,  7.4431e-13, -5.1979e-14,  3.4283e-15, -2.1421e-16],
         [ 1.0000e+00, -3.3782e-01,  5.7062e-02, -6.4255e-03,  5.4267e-04,
          -3.6665e-05,  2.0644e-06, -9.9627e-08,  4.2070e-09, -1.5791e-10,
           5.3346e-12, -1.6383e-13,  4.6121e-15, -1.1985e-16,  2.8920e-18,
          -6.5133e-20,  1.3752e-21, -2.7328e-23,  5.1288e-25, -9.1191e-27]]),
 tensor([-0.3680,  4.4499]))
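As a sanity check on the output above, the leading entries of the first row can be reproduced by hand as x^i / i!. The sketch below assumes the rounded feature value -1.1872 as printed; the helper name `rescaled_powers` is introduced here for illustration and is not part of the original code.

```python
import math

def rescaled_powers(x, num_terms):
    """Return [x**i / i! for i in range(num_terms)] (hypothetical helper)."""
    return [x ** i / math.factorial(i) for i in range(num_terms)]

# First feature value, rounded as printed in the output above
row = rescaled_powers(-1.1872, 4)
print([round(v, 4) for v in row])
```

The four values agree with the first four entries of the printed row to about four significant digits; small differences come from the rounding of the input.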

def accuracy(y_hat, y):
    """Compute the number of correct predictions."""
    if len(y_hat.shape) > 1 and y_hat.shape[1] > 1:
        y_hat = y_hat.argmax(1)
    cmp = y_hat.type(y.dtype) == y
    return float(cmp.type(y.dtype).sum())

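def train_epoch_ch3(net, data_iter, loss, updater):
    """Train the model for one epoch."""
    if isinstance(net, torch.nn.Module):
        net.train()
    # Sum of training loss, number of exact matches, number of samples
    metric = Accumulator(3)
    for X, y in data_iter:
        out = net(X)
        l = loss(out, y)
        if isinstance(updater, torch.optim.Optimizer):
            # Built-in PyTorch optimizer
            updater.zero_grad()
            l.mean().backward()
            updater.step()
        else:
            # Custom updater that takes the batch size
            l.sum().backward()
            updater(X.shape[0])
        # accuracy counts exact matches, so it carries little meaning for regression
        metric.add(float(l.sum()), accuracy(out, y), y.numel())
    # Return the average training loss and the fraction of exact matches
    return metric[0] / metric[2], metric[1] / metric[2]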
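The training loop relies on the loss returning one value per sample, so that it can call `l.mean()` for the optimizer step and `l.sum()` for bookkeeping. A minimal sketch of that behavior with `nn.MSELoss(reduction='none')` follows; the tensors `pred` and `target` are made-up values for illustration, not data from this article.

```python
import torch
from torch import nn

# Hypothetical predictions and targets, shape (3, 1)
pred = torch.tensor([[1.0], [2.0], [4.0]])
target = torch.tensor([[1.0], [3.0], [2.0]])

# reduction='none' keeps one squared error per element, shape (3, 1)
per_sample = nn.MSELoss(reduction='none')(pred, target)
# The default reduction='mean' returns a single scalar
default_mean = nn.MSELoss()(pred, target)

print(per_sample.squeeze(1).tolist())
print(float(per_sample.mean()), float(default_mean))
```

Averaging the per-sample tensor gives the same value as the default reduction, which is why `l.mean().backward()` behaves like an ordinary mean-squared-error step.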

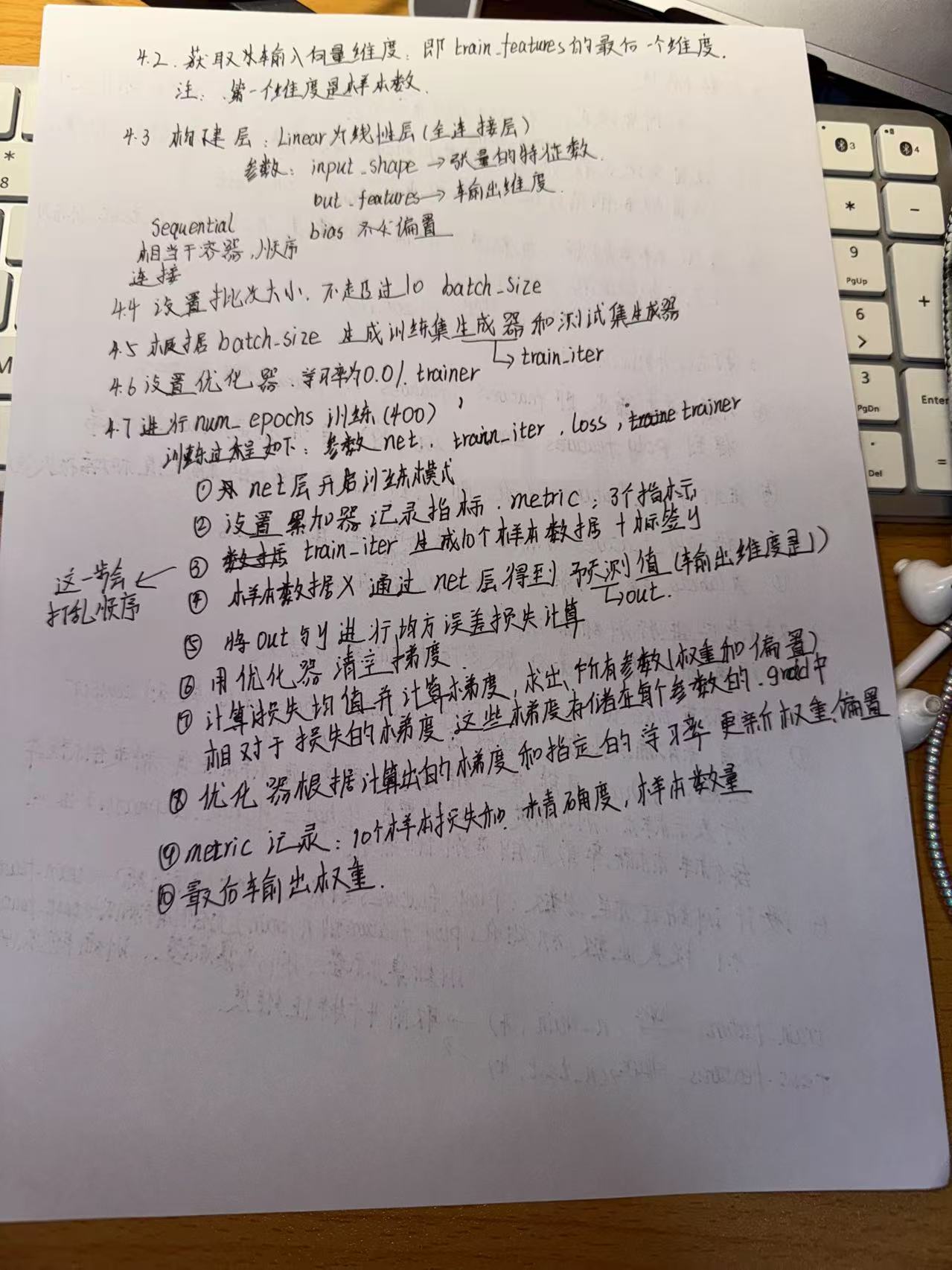

def train(train_features, test_features, train_labels, test_labels, num_epochs=400):
    # Keep per-sample losses; they are reduced inside the training loop
    loss = nn.MSELoss(reduction='none')
    input_shape = train_features.shape[-1]
    # No bias term: the constant column x^0 = 1 already plays that role
    net = nn.Sequential(nn.Linear(input_shape, 1, bias=False))
    batch_size = min(10, train_labels.shape[0])
    train_iter = d2l.load_array((train_features, train_labels.reshape(-1, 1)), batch_size)
    test_iter = d2l.load_array((test_features, test_labels.reshape(-1, 1)), batch_size, is_train=False)
    trainer = torch.optim.SGD(net.parameters(), lr=0.01)
    animator = d2l.Animator(xlabel='epoch', ylabel='loss', yscale='log', xlim=[1, num_epochs], ylim=[1e-3, 1e2], legend=['train', 'test'])
    for epoch in range(num_epochs):
        train_epoch_ch3(net, train_iter, loss, trainer)
        # Log and plot the losses on the first epoch and every 20 epochs after that
        if epoch == 0 or (epoch + 1) % 20 == 0:
            print("epoch: ", epoch)
            print(evaluate_loss(net, train_iter, loss))
            animator.add(epoch + 1, (evaluate_loss(net, train_iter, loss), evaluate_loss(net, test_iter, loss)))
    print('weight: ', net[0].weight.data.numpy())

- Fitting a third-order polynomial (normal)

# Use the first four feature columns: 1, x, x^2/2!, x^3/3!
train(poly_features[:n_train, :4], poly_features[n_train:, :4],
      labels[:n_train], labels[n_train:], num_epochs=400)

weight: [[ 5.018691 1.2304041 -3.4126675 5.511929 ]]
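To see how close this result is, the printed weights can be compared with the true coefficients [5, 1.2, -3.4, 5.6] defined when the dataset was generated. The sketch below copies the learned values from the output above; the variable names `learned_w` and `abs_errors` are illustrative only.

```python
# True coefficients used to generate the data
true_w = [5.0, 1.2, -3.4, 5.6]
# Learned weights copied from the printed output above
learned_w = [5.018691, 1.2304041, -3.4126675, 5.511929]

abs_errors = [abs(l - t) for l, t in zip(learned_w, true_w)]
for t, l, e in zip(true_w, learned_w, abs_errors):
    print(f"true={t:5.1f}  learned={l:9.6f}  abs_error={e:.4f}")
print(f"largest absolute error: {max(abs_errors):.4f}")
```

All four learned weights land within 0.09 of the true values, which is what a well-matched model is expected to do on this data.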

- Fitting a linear function (underfitting)

# Use only the first two feature columns: 1 and x
train(poly_features[:n_train, :2], poly_features[n_train:, :2],
      labels[:n_train], labels[n_train:], num_epochs=400)

- Fitting a higher-order polynomial (overfitting)

# Use all 20 feature columns
train(poly_features[:n_train, :], poly_features[n_train:, :],
      labels[:n_train], labels[n_train:], num_epochs=1500)
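The three experiments can also be compared side by side without PyTorch or d2l. The sketch below is not the article's training procedure: it swaps SGD for a closed-form least-squares fit via `np.linalg.lstsq`, uses an arbitrarily chosen random seed, and introduces the helper `fit_and_score` for illustration. It regenerates data with the same recipe and reports training and test mean squared error for 2, 4, and 20 feature columns.

```python
import math
import numpy as np

rng = np.random.default_rng(0)  # arbitrary seed, an assumption of this sketch
max_degree, n_train, n_test = 20, 100, 100

true_w = np.zeros(max_degree)
true_w[:4] = [5, 1.2, -3.4, 5.6]

# Same recipe as the article: column i holds x^i / i!
x = rng.normal(size=(n_train + n_test, 1))
poly = np.power(x, np.arange(max_degree).reshape(1, -1))
poly /= np.array([math.factorial(i) for i in range(max_degree)], dtype=float)
y = poly @ true_w + rng.normal(scale=0.1, size=n_train + n_test)

def fit_and_score(num_features):
    """Least-squares fit on the first num_features columns (hypothetical helper).

    Returns (train_mse, test_mse).
    """
    X_tr, X_te = poly[:n_train, :num_features], poly[n_train:, :num_features]
    y_tr, y_te = y[:n_train], y[n_train:]
    w, *_ = np.linalg.lstsq(X_tr, y_tr, rcond=None)
    train_mse = float(np.mean((X_tr @ w - y_tr) ** 2))
    test_mse = float(np.mean((X_te @ w - y_te) ** 2))
    return train_mse, test_mse

results = {k: fit_and_score(k) for k in (2, 4, 20)}
for k, (train_mse, test_mse) in results.items():
    print(f"{k:2d} features: train MSE = {train_mse:.4f}, test MSE = {test_mse:.4f}")
```

With a least-squares fit, the training error cannot go up as columns are added, so the gap between training and test error is the quantity to watch. The two-column model should show a large error on both sets, which is the signature of underfitting.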
